Alibaba ATH Innovation Division

Happy Oyster

Create Interactive 3D Worlds in Real Time (2026)

- Re-explorable 3D output instead of one-shot video

- Two distinct authoring modes (Directing + Wandering)

- Native audio-video co-generation in a world model

Alibaba ATH Innovation Division vs ByteDance

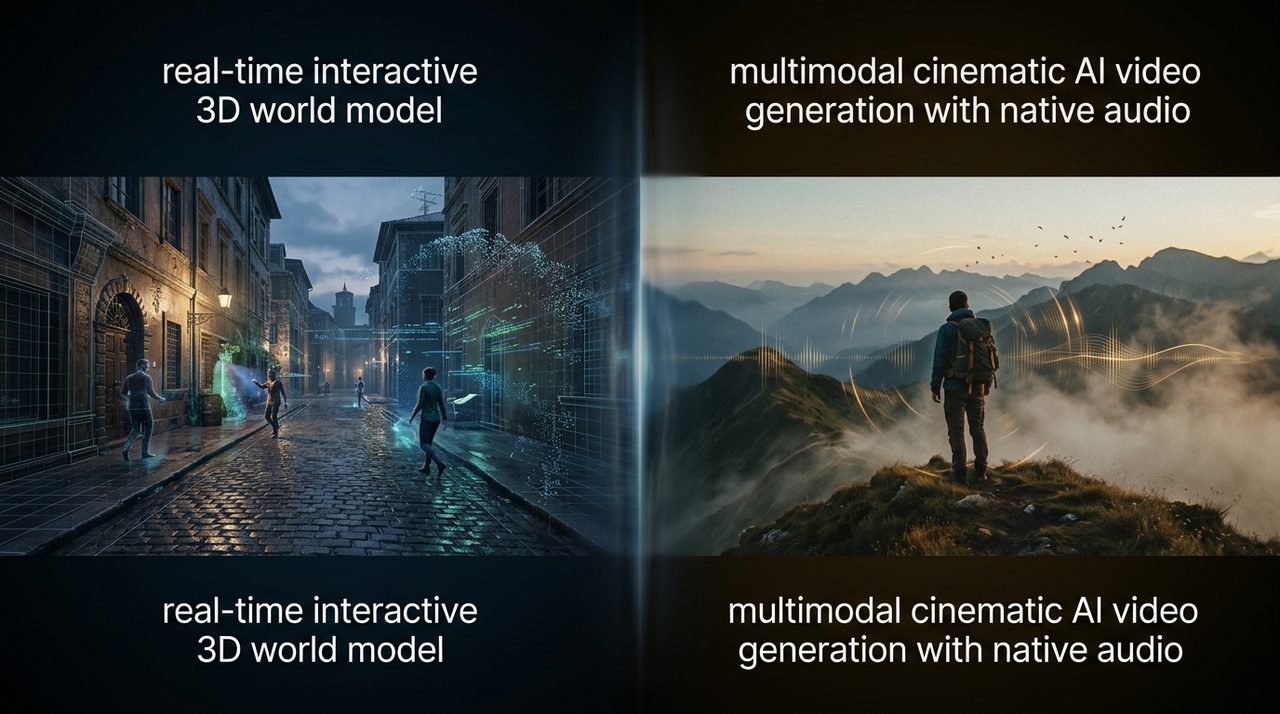

Happy Oyster ("Create Interactive 3D Worlds in Real Time (2026)") compared to Seedance 2.0 ("Multimodal cinematic AI video generation with native audio").

Happy Oyster and Seedance 2.0 target adjacent use cases but take different approaches: Happy Oyster generates interactive 3D worlds, while Seedance 2.0 generates video clips. This page compares them side by side on output paradigm, access, capabilities, and positioning, based on vendor-stated claims as of 2026-04-20 / 2026-04-21.


| Dimension | Happy Oyster | Seedance 2.0 |
|---|---|---|
| Modality | 3D world, interactive, audio-video | text-to-video, image-to-video, video-to-video, audio-to-video |
| Release status | Early access (2026-04-16) | Public (2026-02-12) |
| Capabilities | Directing Mode · Wandering Mode · Native Audio-Video Co-generation · 3D World Generation | Native Audio Generation · Multimodal Reference Mixing · Scene Extension and Editing · Multi-Shot Storytelling |
| Output type | Interactive 3D world (not pre-rendered video) | — |
| Modes | Directing + Wandering | — |
| Audio | Natively co-generated with visuals | — |
| Access | Limited early access (April 2026) | — |
| API availability | Not publicly documented | — |
| Pricing | Not announced | — |
| Game-engine export | Not confirmed | — |
| Maximum duration per shot | — | 15 seconds |
| Output resolution | — | 1080p (Full HD) |
| Max input assets per generation | — | 12 items |